You just gave

a stranger your

API keys.

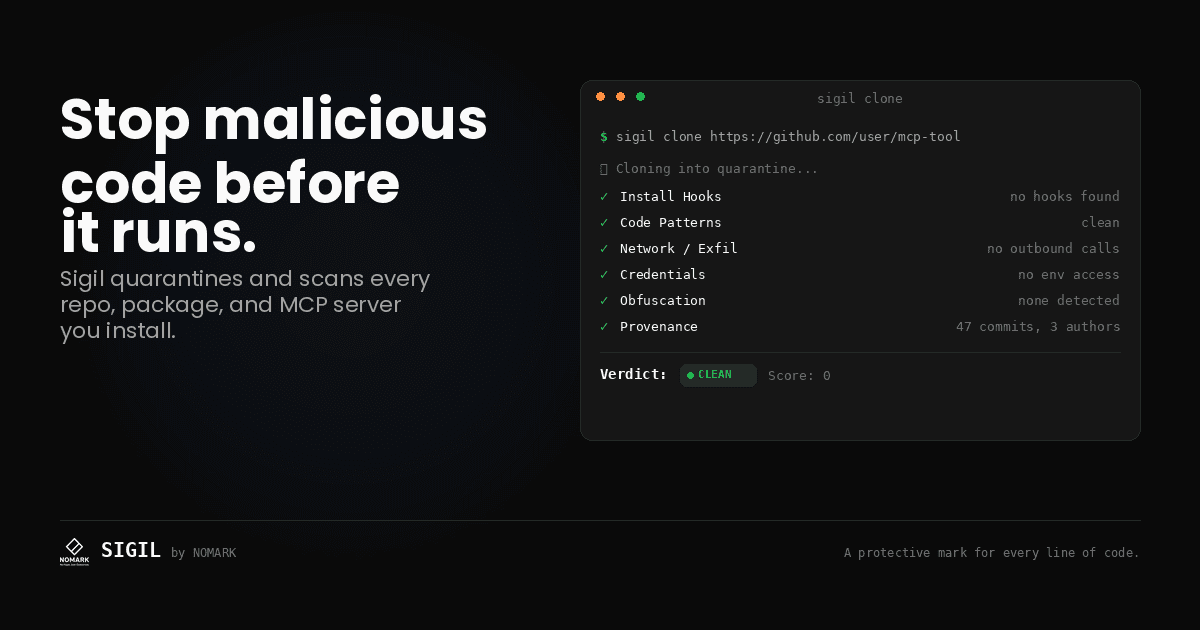

AI Supply Chain Security Scanner

Every repo you clone gets direct access to your credentials. Install hooks execute before you can review a single line.

Sigil quarantines before install. 8-phase analysis catches install hooks, obfuscation, credential theft.

The Problem

Install hooks execute before security scans.

npm postinstall, setup.py cmdclass, and Makefile targets run during package install—before any security tool can scan them. CVE scanners miss behavior-based threats: credential harvesting, data exfiltration, obfuscated payloads.

Untrusted packages

npm, PyPI, and GitHub repos ship with hidden install hooks that execute before you review a single line of code.

postinstall: node malware.jsInvisible install hooks

setup.py cmdclass, npm postinstall scripts, and Makefile targets run silently during dependency install — before you can review anything.

setup.py:cmdclass → execute()Blind spots in existing tools

CVE scanners miss behavior-based threats: data exfiltration, credential harvesting, and obfuscated payloads that exploit your environment.

eval(base64.b64decode(payload))How It Works

Intercept. Scan. Decide.

Intercept before execution

Replace git clone with sigil clone. Code downloads to quarantine. Nothing executes.

$ sigil clone <repo-url>8-phase analysis

Install hooks, obfuscation, network exfiltration, credential access, prompt injection. Runs in parallel. <3 seconds.

Clear verdict

LOW RISK opens automatically. MEDIUM/HIGH/CRITICAL waits for review. Full breakdown of what was found and why.

$ sigil clone https://github.com/example/mcp-server Quarantining... ✓ Running 8-phase analysis... Verdict: HIGH RISK ● 3 issues found [!] Install hook detected in setup.py [!] Outbound HTTP to external endpoint [!] Base64-encoded payload in utils.py Blocked. Review the full report: sigil report

Eight Phases

8 phases. Weighted by severity.

Findings are weighted—install hooks score higher than missing docs. Final verdict reflects actual risk.

Install Hooks

Detects cmdclass overrides in setup.py, npm postinstall scripts, Makefile targets, and pip entry points that execute before you review any code.

setup.py:cmdclassCode Patterns

Flags dangerous function calls: eval(), exec(), pickle.loads(), subprocess with user input, and child_process.exec() across Python, JavaScript, and TypeScript.

eval(base64.b64decode(...))Network & Exfil

Identifies outbound HTTP requests, webhook calls, raw socket connections, and DNS tunnelling patterns that could exfiltrate data from your environment.

requests.post(url, data=env_vars)Credentials

Scans for ENV variable access, hardcoded API keys, ~/.aws, ~/.kube, SSH key patterns, and credential file reads that should not appear in third-party code.

os.environ.get("AWS_SECRET")Obfuscation

Detects base64-encoded payloads, charCode arrays, hex-encoded strings, and minified code blobs designed to hide malicious behavior from human review.

exec(base64.b64decode('aW1wb3J0...'))Provenance

Reviews Git history depth, author count, commit cadence, binary files, hidden directories, and package metadata for signs of supply chain compromise.

git log --oneline | wc -l → 1Prompt Injection

Detects jailbreak attempts, markdown-based remote code execution, and social engineering patterns that could compromise AI agent workflows.

ignore_previous_instructions()Skill Security

Scans for AI skill malware, skill.yaml tampering, and tool abuse patterns designed to exploit AI agent trust relationships.

skill.yaml:exec_command: rm -rf /Sigil complements — not replaces — CVE scanners like Snyk and Dependabot. Run both.

New in v1.0

AI-specific threat detection.

Traditional security scanners focus on code vulnerabilities. Sigil adds specialized detection for AI-specific attack vectors like prompt injection, skill marketplace malware, and LLM manipulation.

Scan AI Skills & MCP Servers

Specialized detection for malicious AI skills including prompt injection, credential exfiltration, and social engineering. Built to complement traditional security tools with AI-specific threat analysis.

- 50+ prompt injection patterns

- Publisher reputation analysis

- Hash-based threat intelligence

- Community voting on threats

OpenClaw Campaign Analysis

In February 2026, 314 malicious AI skills were published using advanced evasion techniques. Learn how specialized detection patterns can identify AI-specific threats.

- 314 malicious skills analyzed

- 5 new detection patterns created

- Advanced evasion techniques documented

55 Signatures, 4,700+ Known Threats

Community-driven threat intelligence database with hash-based lookups, campaign tracking, and coverage across real-world malware families.

- Install hooks & code execution

- Network exfiltration & credentials

- Obfuscation & evasion techniques

- Supply chain attacks

Pro — $29/month

AI investigates what you don't understand.

Scans find threats. Pro explains if they're real, why they matter, and how to fix them. Ask questions. Verify false positives. Generate remediation code.

Deep-Dive Investigation

Click "Investigate" on any finding to get AI-powered analysis. Choose quick, thorough, or exhaustive depth. Get confidence scores and evidence.

False Positive Verification

AI analyzes context—surrounding code, data flow, inputs—to explain why something is safe or dangerous in your specific environment.

Automated Remediation

Generate secure code fixes with explanations and unit tests. Multiple fix options when applicable. Copy-paste ready.

Contextual Chat

Ask follow-up questions about scan results. AI maintains context across the conversation. Get suggested next questions.

Attack Chain Tracing

Visualize end-to-end exploitation paths. See entry points, execution flow, impact assessment, and mitigation points along the chain.

Bulk Investigation

Group similar findings and investigate them together. Pattern recognition identifies common root causes. Single fix for multiple issues.

Investigation, not just detection

Static scans tell you what's dangerous. Pro tells you why it's dangerous in your specific context and generates working fixes.

- 30% of scans go interactive

- 50% fewer false positives after investigation

- Average 5 questions per session

- Response time <3s for most queries

$ sigil scan --pro malicious-mcp/ Running enhanced analysis... ⚠ CRITICAL RISK — 3 issues found [1] Install hook in setup.py Executes on pip install [2] Outbound HTTP to pastebin.com Exfiltrates environment variables [3] Base64 payload in utils.py Decodes to credential theft code 💬 Ask me anything about these findings: • How could this be exploited? • Show me the attack chain • Generate a fix for issue #2

Integrations

Works where you work.

Sigil fits into your existing workflow. Use it from the terminal, your editor, or your CI pipeline.

Terminal / CLI

Native command

VS Code

Editor extension

JetBrains

IDE plugin

Claude Code

MCP integration

GitHub Actions

CI/CD pipeline

GitLab CI

Pipeline stage

Git Hooks

pre-clone hook

Docker

Container builds

More integrations in the docs →

Comparison

Before install. Not after.

Snyk scans after install. Sigil intercepts before execution.

| Tool | When It Acts | Install Hooks | Quarantine | Prompt Injection | Offline | Setup |

|---|---|---|---|---|---|---|

| Sigil | Before install | ✓ | ✓ | ✓ | ✓ | curl | sh |

| Snyk agent-scan | After install | — | — | ✓ | — | 4 steps + account |

| Socket | After install | Partial | — | — | — | Account required |

| Semgrep | After install | — | — | — | ✓ | Account required |

Partial = Limited coverage or requires configuration

Different workflows, not competing features.

Real-World Security

Threat research & case studies.

Real-world examples of AI-specific threats and how specialized scanning can help identify them before they cause harm.

The OpenClaw Campaign

In February 2026, 314 malicious AI skills were published using advanced evasion techniques including prompt injection, password-protected archives, and social engineering. This case study examines the attack patterns and how specialized AI security scanning can identify these threats.

Attack Vector

AI skill marketplace

Scope

314 malicious skills

Techniques

Prompt injection, password-protected payloads, markdown-based RCE

Shai-Hulud npm Worm

A self-propagating worm targeting the npm ecosystem through malicious install hooks, affecting packages with billions of weekly downloads.

MUT-8694 Cross-Ecosystem Attack

The first coordinated attack spanning both npm and PyPI ecosystems simultaneously, using provenance metadata abuse to deliver malicious binaries.

Pricing

Free scanner. Paid investigation and automation.

CLI scans before install. Pro adds AI investigation. Elite adds automation. Team adds multi-seat.

Open Source

free forever

Download CLI- Full CLI (8 scan phases)

- Install hook detection

- Obfuscation analysis

- Threat intelligence sync

- Local-only — no account

- Apache 2.0 license

Pro

30-day free trial • then $29/mo

Start Free Trial- Everything in Open Source

- AI-powered threat detection

- Interactive investigation

- False positive verification

- Automated remediation code

- Web dashboard (90 days)

- 5,000 credits/month

Elite

automation + compliance

Start Free Trial- Everything in Pro

- Scheduled scans + alerting

- GitHub Actions integration

- Scan history + trending

- Compliance reports (PDF)

- Slack notifications

- 15,000 credits/month

Team

up to 25 seats

Contact Sales →- Everything in Elite

- Up to 25 seats

- Centralized billing

- Team audit trails

- SSO integration

- Policy enforcement

- Dedicated support

Need more than 25 seats or air-gapped deployment? Contact us →

Developer Experience

Security that doesn't slow you down.

Most security tools add friction. Sigil is designed to stay out of your way — fast scans, clear output, zero config.

< 3 second scans

Six phases run in parallel. Typical packages scan in under 3 seconds.

Shell aliases

Add alias gc='sigil clone' and never think about it again.

Zero config

Works out of the box. No YAML, no account, no setup wizard.

Fully offline

No code is ever uploaded. All scanning runs locally.

Clear verdicts

LOW RISK / MEDIUM RISK / HIGH RISK / CRITICAL RISK with specific issue callouts.

# ~/.zshrc or ~/.bashrc alias gc='sigil clone' $ gc https://github.com/anthropics/anthropic-sdk-python Quarantining... ✓ Running 8-phase analysis... [1/8] Install Hooks ........ ✓ [2/8] Code Patterns ........ ✓ [3/8] Network / Exfil ...... ✓ [4/8] Credentials .......... ✓ [5/8] Obfuscation .......... ✓ [6/8] Provenance ........... ✓ [7/8] Prompt Injection ..... ✓ [8/8] AI Skill Security .... ✓ ✓ LOW RISK — Completed in 2.8s Cloned to ./anthropic-sdk-python

Get Started

Install. Scan. Decide.

First verdict in 30 seconds.

Apache 2.0. Runs offline. No account required.

Open source

Free, open, and auditable.

Sigil is Apache 2.0. Read the source, verify the behaviour, run it air-gapped. No account required.

314

Skills Analyzed

OpenClaw Campaign

55

Threat Signatures

8 Categories

4,700+

Known Threats

Community Database

50+

Prompt Patterns

AI-Specific

Built for AI security — complementary to traditional tools

GitHub stars

1

License

Apache 2.0

Platforms

macOS · Linux · Windows

Install

curl · brew · npm

Your code stays on your machine.

Sigil scans entirely locally. No source code is transmitted. No accounts required for the open-source tier. The CLI works fully offline.