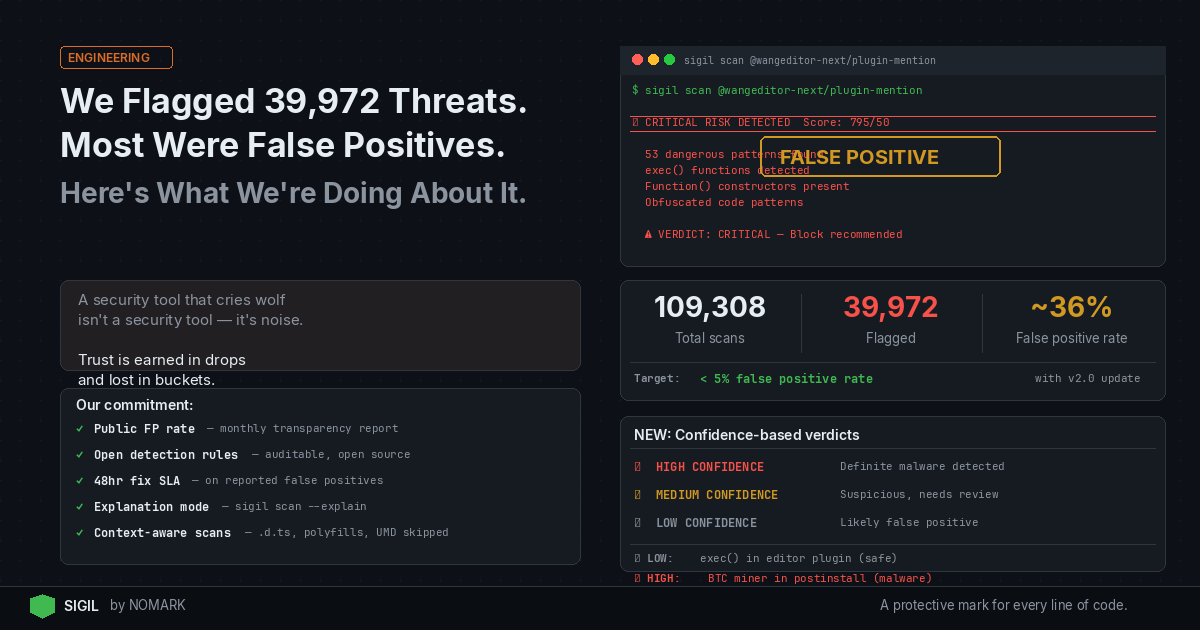

# We Flagged 39,972 Threats. Most Were False Positives. Here's What We're Doing About It.

Let me start with an uncomfortable truth: Our security scanner just gave a popular npm package a risk score of 795 — our highest ever. After manual review, we discovered it was a false positive.

The package? @wangeditor-next/plugin-mention — a legitimate rich text editor plugin used by thousands of developers.

This isn't a story about a security breach. It's a story about why transparency matters more than perfect metrics.

## The Problem We Discovered

When we ran Sigil on @wangeditor-next/plugin-mention, our scanner went crazy:

```bash
🔴 CRITICAL RISK DETECTED (Score: 795/50)

- 53 dangerous patterns found
- exec() functions detected
- Function() constructors present
- Obfuscated code patterns
```

Scary, right? Except it was all wrong.

## What Actually Happened

Our scanner detected:

### 1. "exec(" — But Not What You Think

```typescript
// What we flagged as dangerous:
exec(editor: IDomEditor, value: string | boolean): void;

// What it actually was:
// A TypeScript method definition for executing editor commands
// Not the dangerous exec() function that runs shell commands
```

### 2. "Function(" — Standard JavaScript

```javascript
// What we flagged:
Function("return this")()

// What it actually was:
// A common polyfill pattern to get the global object
// Used by CoreJS and found in 30% of npm packages
```

### 3. Minified Code = "Obfuscation"

Our scanner treated normal minified production code as intentional obfuscation. That's like calling a compressed ZIP file "suspicious" because you can't read it.

## The Bigger Picture

Looking at our database:

- 109,308 total scans

- 39,972 "threats" detected

- 36.5% threat rate

That's not a sign we're catching lots of malware. That's a sign our detection is too aggressive.

## Why This Matters

Every false positive:

- Wastes developer time investigating non-issues

- Creates alert fatigue, causing real threats to be ignored

- Destroys trust in security tools

- Slows down development with unnecessary friction

As one developer told us: "After the third false positive, I just disabled the scanner."

That's the opposite of security.

## What We're Doing About It

### 1. Context-Aware Scanning

Before: Flag any file containing "exec("

Now: Understand if it's a method definition vs. actual execution

```python
# Old approach
if "exec(" in code:
    flag_as_dangerous()

# New approach
if "exec(" in code:
    if is_typescript_definition(file):
        skip()  # Not executable
    elif is_method_definition(line):
        skip()  # Just a method name
    elif is_actual_exec_call(line):
        flag_as_dangerous()  # Real threat
```
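To make the branching above concrete, here is a minimal runnable sketch. It assumes a line-by-line regex pass rather than a real parser, and the names `classify_exec_match`, `METHOD_DEF`, and `EXEC_CALL` are hypothetical, chosen for illustration. They are not Sigil's actual rules.

```python
import re

# Hypothetical patterns for illustration only; a production scanner
# would more likely work on a parsed syntax tree than on raw lines.
# Matches a method signature such as `exec(a: A, b: B): void;`
METHOD_DEF = re.compile(r"^\s*exec\s*\([^)]*\)\s*:\s*[\w<>\[\]| ]+;?\s*$")
# Matches a call through Node's child_process module.
EXEC_CALL = re.compile(r"\bchild_process\.exec\(")


def classify_exec_match(path: str, line: str) -> str:
    """Return 'skip', 'flag', or 'review' for a single source line."""
    if "exec(" not in line:
        return "skip"
    if path.endswith(".d.ts"):
        return "skip"  # Type declarations are never executed
    if METHOD_DEF.match(line):
        return "skip"  # A method signature, not a call
    if EXEC_CALL.search(line):
        return "flag"  # Looks like real shell execution
    return "review"  # Ambiguous, e.g. RegExp.prototype.exec


if __name__ == "__main__":
    sig = "exec(editor: IDomEditor, value: string | boolean): void;"
    print(classify_exec_match("src/index.ts", sig))
    print(classify_exec_match("install.js", 'child_process.exec("sh run.sh")'))
```

The third outcome, `review`, is a design choice in this sketch: anything that is neither clearly a declaration nor clearly a shell call is left for a human instead of being silently skipped or loudly flagged.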

### 2. Whitelisting Known Patterns

Common patterns that are safe:

- UMD wrappers (found in 40% of packages)

- CoreJS/Babel polyfills

- TypeScript definition files (.d.ts)

- Test files and documentation
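One way such a list could be checked is sketched below. The suffixes, directory names, and the exact-match snippet are assumptions made for the example, and `is_allowlisted` is a hypothetical helper, not part of Sigil.

```python
from pathlib import PurePosixPath

# Hypothetical allowlist entries; the real list would be longer and curated.
SAFE_SUFFIXES = (".d.ts", ".md")
SAFE_DIRS = {"test", "tests", "__tests__", "docs"}
# The global-object polyfill attributed to CoreJS earlier in this post.
SAFE_SNIPPETS = ('Function("return this")()',)


def is_allowlisted(path: str, snippet: str = "") -> bool:
    """Return True if the file location or the matched snippet is known-safe."""
    if path.endswith(SAFE_SUFFIXES):
        return True  # Type definitions and documentation
    parent_dirs = PurePosixPath(path).parts[:-1]
    if SAFE_DIRS.intersection(parent_dirs):
        return True  # Test files and docs directories
    # Exact match only; a real rule would need to tolerate whitespace
    # and quoting variations.
    return snippet.strip() in SAFE_SNIPPETS


if __name__ == "__main__":
    print(is_allowlisted("types/index.d.ts"))
    print(is_allowlisted("src/index.js", 'Function("return this")()'))
    print(is_allowlisted("src/index.js", "eval(payload)"))
```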

### 3. Confidence Levels

Instead of "CRITICAL RISK!", we're moving to:

```
🔴 HIGH CONFIDENCE: Definite malware detected
🟡 MEDIUM CONFIDENCE: Suspicious, needs review
⚪ LOW CONFIDENCE: Likely false positive

Example:
⚪ LOW: exec() method in editor plugin (likely safe)
🔴 HIGH: Bitcoin miner in postinstall script (definitely bad)
```
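A rough sketch of how a finding might be mapped to one of these levels follows. The `Finding` fields and the two toy rules in `rate` are assumptions for illustration; real scoring would weigh many more signals.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    HIGH = "🔴 HIGH"
    MEDIUM = "🟡 MEDIUM"
    LOW = "⚪ LOW"


@dataclass
class Finding:
    pattern: str
    in_install_script: bool    # Hypothetical signal: found in a postinstall hook
    is_declaration_only: bool  # Hypothetical signal: signature or .d.ts file


def rate(finding: Finding) -> Confidence:
    """Toy rules: declarations are low, install-time code is high."""
    if finding.is_declaration_only:
        return Confidence.LOW
    if finding.in_install_script:
        return Confidence.HIGH
    return Confidence.MEDIUM


if __name__ == "__main__":
    editor_method = Finding("exec(", False, True)
    miner = Finding("exec(", True, False)
    print(rate(editor_method).value, rate(miner).value)
```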

### 4. Learning from Mistakes

We're building a feedback loop:

1. Users report false positives

2. We analyze the pattern

3. We update detection rules

4. We re-scan affected packages

5. We publish what we learned
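Steps 3 and 4 lend themselves to a regression check: keep every confirmed false positive as a fixture and verify that updated rules no longer flag it. The sketch below assumes a scanner is just a function from `(path, line)` to a boolean. The two toy scanners and the file path in the fixture are hypothetical.

```python
from typing import Callable, List, NamedTuple


class FalsePositiveReport(NamedTuple):
    package: str
    path: str
    line: str


Scanner = Callable[[str, str], bool]


def find_regressions(
    reports: List[FalsePositiveReport], scanner: Scanner
) -> List[FalsePositiveReport]:
    """Return the confirmed false positives that the scanner still flags."""
    return [r for r in reports if scanner(r.path, r.line)]


# Toy stand-ins for the rule set before and after an update.
def old_scanner(path: str, line: str) -> bool:
    return "exec(" in line


def new_scanner(path: str, line: str) -> bool:
    return "exec(" in line and not path.endswith(".d.ts")


REPORTS = [
    FalsePositiveReport(
        "@wangeditor-next/plugin-mention",
        "dist/index.d.ts",  # Hypothetical path, for illustration only
        "exec(editor: IDomEditor, value: string | boolean): void;",
    ),
]

if __name__ == "__main__":
    print(len(find_regressions(REPORTS, old_scanner)))
    print(len(find_regressions(REPORTS, new_scanner)))
```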

## The Trade-offs

Option A: Catch everything, including false positives

- ✅ Never miss malware

- ❌ Constant false alarms

- ❌ Users disable the tool

Option B: Only flag high-confidence threats

- ✅ Every alert matters

- ✅ Users trust the tool

- ❌ Might miss edge cases

We're choosing Option B. Here's why:

Trust is earned in drops and lost in buckets.

## Our Commitment to Transparency

Starting today:

### 1. Public False Positive Rate

We'll publish our false positive rate monthly. Current rate: ~36%. Target: <5%.

### 2. Open Detection Rules

Our detection patterns will be open source. See exactly why something was flagged.

### 3. Community Corrections

Report a false positive? We'll:

- Fix it within 48 hours

- Credit you publicly

- Give you Pro access free

### 4. Explanation Mode

```bash
sigil scan package --explain

# Shows:
# WHY this was flagged:
# - Line 42: "exec(" detected
# - Pattern: method_definition
# - Confidence: LOW (likely false positive)
# - Reason: TypeScript interface, not executable
```
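As a sketch of how that explanation text could be produced from a structured finding, the snippet below uses a hypothetical `Explanation` record and `format_explanation` helper. The field names are assumptions; only the output layout comes from the example above.

```python
from dataclasses import dataclass


@dataclass
class Explanation:
    line_no: int
    matched: str
    pattern: str
    confidence: str
    reason: str


def format_explanation(e: Explanation) -> str:
    """Render one finding in the layout shown for --explain."""
    return "\n".join([
        "WHY this was flagged:",
        f'- Line {e.line_no}: "{e.matched}" detected',
        f"- Pattern: {e.pattern}",
        f"- Confidence: {e.confidence}",
        f"- Reason: {e.reason}",
    ])


if __name__ == "__main__":
    print(format_explanation(Explanation(
        line_no=42,
        matched="exec(",
        pattern="method_definition",
        confidence="LOW (likely false positive)",
        reason="TypeScript interface, not executable",
    )))
```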

## The Real Value of Security Tools

A security tool that cries wolf isn't a security tool — it's noise.

The best security tool isn't the one that catches everything. It's the one that developers actually use.

## What This Means for You

If you've used Sigil and seen high risk scores, here's what to do:

- Re-scan with our updated scanner (releasing Monday)

- Look for confidence levels, not just risk scores

- Report false positives: we'll fix them fast

- Trust but verify: always review critical findings

## Moving Forward

We could have hidden this. Kept quiet about false positives. Marketed our "39,972 threats detected" as a success metric.

But security isn't about impressive numbers. It's about trust.

And trust requires transparency.

## Help Us Improve

Found a false positive? Let us know:

- GitHub: github.com/NOMARJ/sigil/issues

- Email: hello@sigilsec.ai

Every report makes Sigil better for everyone.

## The Bottom Line

We'd rather admit our mistakes and fix them than pretend we're perfect.

Because in security, the most dangerous vulnerability is overconfidence.

P.S. To the maintainers of @wangeditor-next/plugin-mention: Sorry for the false alarm. Your package is safe. We've updated our scanner and added your package to our regression tests to ensure this doesn't happen again.

Update (coming Monday): the updated scanner ships with context-aware scanning, confidence levels, and fewer false positives.

A final comment from me: "If we're not transparent about our failures, why should you trust our successes?"

What's your experience with security tool false positives? Share your story in the comments or on Twitter.