How Do You Secure AI Agent Code? The Three-Layer Security Stack Explained

AI agent development introduces supply-chain attack vectors that traditional security tools were never designed to catch. The three-layer security stack solves this by combining pre-installation quarantine scanning, deep AI-powered vulnerability analysis, and defense-in-depth workflows that protect code from package installation through production deployment.

What Is the AI Code Security Gap?

The AI code security gap is the blind spot between what traditional dependency scanners detect (known CVEs in package trees) and what actually threatens AI developers (intentionally malicious code hidden in open-source agent tooling, MCP servers, and community packages).

Developers building with AI frameworks routinely install MCP servers from GitHub repositories with minimal review, agent toolkits from npm and PyPI published by unknown authors, LangChain plugins shared in tutorials and blog posts, and RAG tools distributed through Discord channels. Once installed, code from any of these sources runs with direct access to API keys, databases, file systems, and cloud credentials.

Traditional security scanners like Snyk and Dependabot check package dependency trees against databases of known CVEs—but they don't scan the actual source code for malicious intent. A supply-chain attack using a zero-day exploit, an obfuscated payload, or a hidden install hook will pass right through.
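
To make the gap concrete, the sketch below contrasts a CVE-style lookup with a source-level check on a package manifest. The advisory table, package version, and script path are hypothetical. The three script names are the lifecycle hooks npm runs automatically when a package is installed.

```python
import json

# Hypothetical advisory database keyed by (package, version); a real scanner
# would query a feed such as OSV or the GitHub Advisory Database instead.
KNOWN_ADVISORIES = {("example-lib", "1.0.0"): ["CVE-XXXX-YYYY"]}

# Lifecycle scripts that npm runs automatically when a package is installed.
INSTALL_LIFECYCLE_SCRIPTS = ("preinstall", "install", "postinstall")


def cve_lookup(name: str, version: str) -> list[str]:
    """CVE-style check: only knows about packages that were already reported."""
    return KNOWN_ADVISORIES.get((name, version), [])


def find_install_hooks(package_json_text: str) -> dict[str, str]:
    """Source-level check: report scripts that would run during `npm install`."""
    scripts = json.loads(package_json_text).get("scripts", {})
    return {name: cmd for name, cmd in scripts.items() if name in INSTALL_LIFECYCLE_SCRIPTS}


manifest = json.dumps({
    "name": "langchain-community-extra",
    "version": "0.1.0",
    "scripts": {"test": "jest", "postinstall": "node scripts/setup.js"},
})

print(cve_lookup("langchain-community-extra", "0.1.0"))  # []
print(find_install_hooks(manifest))  # {'postinstall': 'node scripts/setup.js'}
```

A `postinstall` script is not malicious by itself (many packages with native code use one), so a scanner treats it as a signal to inspect the script it runs rather than as a verdict. The point is that the CVE lookup never looks at it at all.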

Why Can't Traditional Security Tools Protect AI Developers?

Traditional security tools were built for a different threat model. They assume packages are authored in good faith and that vulnerabilities are accidental. The AI agent ecosystem breaks both assumptions.

Here's where each tool falls short:

| Tool | What It Does | What It Misses |
|---|---|---|
| Snyk / Dependabot | Flags known CVEs in dependency trees | Doesn't scan source code for intentional malice or install hooks |
| Socket.dev | Detects supply chain signals in npm | npm only: no Python, git repos, MCP servers, or direct downloads |
| GitHub CodeQL | Semantic analysis on GitHub-hosted repos | Requires GitHub hosting, no quarantine workflow, not designed for third-party code audit |
| Semgrep | Pattern-based static analysis | Developer tool only: no threat intelligence, no quarantine, no risk scoring |

None of these tools quarantine code before it executes. None aggregate threat intelligence specific to the AI agent ecosystem. None provide a holistic risk score that accounts for install hooks, obfuscation, credential access, and provenance signals together.

What Is the Three-Layer AI Security Stack?

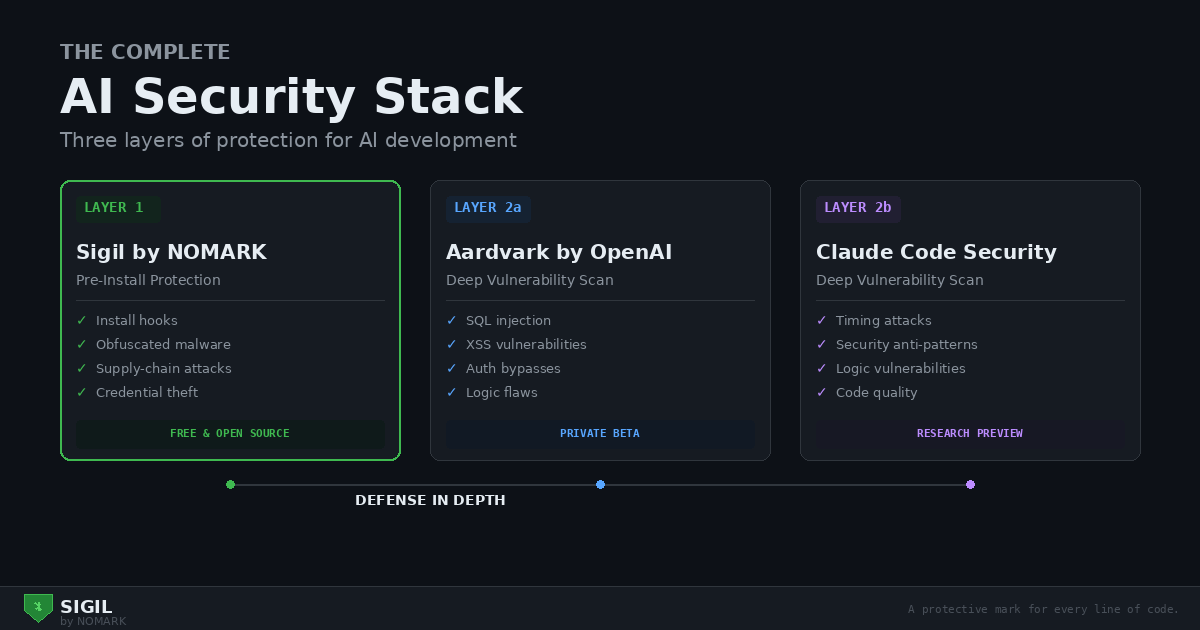

The three-layer AI security stack is a defense-in-depth approach that combines three complementary tools, each operating at a different stage of the development lifecycle:

Layer 1 — Pre-Installation Protection (Sigil by NOMARK): Quarantines and scans code before it enters your environment. Runs before npm install, pip install, or git clone. Free and open source.

Layer 2a — Deep Vulnerability Scanning (Aardvark/Codex Security by OpenAI): Autonomous AI agent powered by GPT-5 that scans existing code for vulnerabilities on every commit. Private beta as of February 2026.

Layer 2b — Deep Vulnerability Scanning (Claude Code Security by Anthropic): AI-powered vulnerability scanner using Claude's most capable models with adversarial verification to reduce false positives. Research preview with waitlist.

The key insight: Aardvark and Claude Code Security compete with each other (both do deep vulnerability scanning), but Sigil complements both because it operates at a completely different layer—before code is installed rather than after.

How Does Pre-Installation Scanning Work?

Sigil, built by NOMARK, uses a quarantine-first approach: nothing runs until it has been scanned, scored, and explicitly approved. The CLI wraps common developer workflows (git clone, pip install, npm install) with automatic quarantine and six-phase analysis.
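
The quarantine-first ordering can be sketched independently of Sigil. This is not Sigil's implementation. The function names and the approval threshold are hypothetical, and `scan` stands in for whatever analysis actually runs. What the sketch shows is the sequence: copy the code somewhere isolated, inspect it without executing anything, decide, and only then allow installation.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def quarantine_and_scan(
    source_dir: Path,
    scan: Callable[[Path], int],
    approve_threshold: int = 0,
) -> tuple[bool, int]:
    """Copy untrusted code into a throwaway directory, scan it, and decide.

    Nothing in the copied tree is imported or executed: `scan` only reads files.
    Returns (approved, score); installation should proceed only if approved.
    """
    quarantine = Path(tempfile.mkdtemp(prefix="quarantine-"))
    try:
        # symlinks=True copies links as links instead of following them, so a
        # link pointing at something like ~/.ssh is not pulled into the copy.
        shutil.copytree(source_dir, quarantine / "pkg", symlinks=True)
        score = scan(quarantine / "pkg")
        return score <= approve_threshold, score
    finally:
        shutil.rmtree(quarantine)


def naive_scan(root: Path) -> int:
    """Toy scanner: one point per regular file that contains the text 'eval('."""
    return sum(
        1
        for p in root.rglob("*")
        if p.is_file() and not p.is_symlink()
        and "eval(" in p.read_text(errors="ignore")
    )
```

With a directory containing a single `index.js` that calls `eval(atob(x))`, `quarantine_and_scan(path, naive_scan)` returns `(False, 1)`, and the caller would skip the install step.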

What Are the Six Analysis Phases?

Sigil runs six weighted analysis phases on every scan:

| Phase | What It Catches | Attack Vector | Severity Weight |
|---|---|---|---|
| Install Hooks | setup.py cmdclass, npm postinstall, Makefile targets | Code execution on install | Critical (10×) |
| Code Patterns | eval(), exec(), pickle.loads, dynamic imports | Arbitrary code execution | High (5×) |
| Network / Exfiltration | Outbound HTTP, webhooks, socket connections, DNS tunnelling | Data exfiltration | High (3×) |
| Credentials | ENV variable access, .aws, .kube, SSH keys, API key patterns | Credential theft | Medium (2×) |
| Obfuscation | Base64 decode, charCode, hex encoding, minified payloads | Hidden malicious code | High (5×) |
| Provenance | Git history depth, author count, binary files, hidden files | Trust signals | Low (1–3×) |
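
To illustrate how weighted phases could combine into one number, here is a toy line-by-line scanner. The rules, the `Finding` type, and the choice of 1× for provenance are assumptions. Sigil's actual rules and per-finding scoring are not described here, so this sketch will not reproduce the scores Sigil reports.

```python
import re
from dataclasses import dataclass

# Multipliers copied from the phase table; provenance uses the low end (1x).
# Install hooks and provenance come from manifests and git metadata, so this
# line-by-line sketch has no regex rules for them.
PHASE_WEIGHTS = {
    "install_hooks": 10,
    "code_patterns": 5,
    "network": 3,
    "credentials": 2,
    "obfuscation": 5,
    "provenance": 1,
}

# Hypothetical, deliberately small rule set mixing Python and JavaScript idioms.
RULES = [
    ("code_patterns", re.compile(r"\b(?:eval|exec)\s*\(|pickle\.loads")),
    ("network", re.compile(r"requests\.post|urllib\.request|socket\.socket")),
    ("credentials", re.compile(r"os\.environ|\.aws/credentials|\.ssh/id_")),
    ("obfuscation", re.compile(r"base64\.b64decode|String\.fromCharCode|\batob\(")),
]


@dataclass
class Finding:
    phase: str
    line_no: int
    text: str


def scan_source(source: str) -> list[Finding]:
    """Report every (rule, line) match as a finding."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for phase, pattern in RULES:
            if pattern.search(line):
                findings.append(Finding(phase, line_no, line.strip()))
    return findings


def risk_score(findings: list[Finding]) -> int:
    """Cumulative score: each finding adds its phase weight."""
    return sum(PHASE_WEIGHTS[f.phase] for f in findings)


sample = """import base64, os
payload = base64.b64decode(blob)
exec(payload)
token = os.environ["OPENAI_API_KEY"]
"""
findings = scan_source(sample)
print([(f.phase, f.line_no) for f in findings])
# [('obfuscation', 2), ('code_patterns', 3), ('credentials', 4)]
print(risk_score(findings))  # 12
```

Three individually common idioms (decode, execute, read an API key) add up to a score that no single one would reach. That accumulation is the reason for weighting and summing rather than flagging each pattern in isolation.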

Each finding is weighted and aggregated into a cumulative risk score that maps to a clear verdict:

| Score | Verdict | Default Action |
|---|---|---|
| 0 | CLEAN | Auto-approve |
| 1–9 | LOW RISK | Approve with review |
| 10–24 | MEDIUM RISK | Manual review required |
| 25–49 | HIGH RISK | Blocked, requires override |
| 50+ | CRITICAL | Blocked, no override |

What Does a Sigil Scan Look Like in Practice?

Here's what happens when Sigil intercepts a malicious package:

```bash
$ sigil npm langchain-community-extra

🔍 SCAN RESULTS: HIGH RISK

Risk Score: 47 / 100
Threat Level: HIGH

📋 Findings:

- Install Hook Detected (CRITICAL)
  Location: package.json:15
  Pattern: npm postinstall executes hidden script

- Obfuscated Code (HIGH)
  Location: src/index.js:234
  Pattern: Base64 encoded payload detected

- Network Exfiltration (HIGH)
  Location: src/utils.js:89
  Pattern: HTTP POST to unknown endpoint

🛡️ Decision: REJECT
The package has been quarantined and will NOT be installed.
```

The package never executes. Credentials are never exposed. The developer gets a clear explanation of exactly what was found and why.
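
The score bands in the verdict table are plain thresholds. Here is a minimal sketch of the mapping, checked against the score of 47 in the output above. The function and constant names are hypothetical and not part of Sigil's interface.

```python
# (inclusive upper bound, verdict, default action), copied from the score table.
SCORE_BANDS = [
    (0, "CLEAN", "Auto-approve"),
    (9, "LOW RISK", "Approve with review"),
    (24, "MEDIUM RISK", "Manual review required"),
    (49, "HIGH RISK", "Blocked, requires override"),
]


def verdict(score: int) -> tuple[str, str]:
    """Map a cumulative risk score to (verdict, default action)."""
    if score < 0:
        raise ValueError("risk score cannot be negative")
    for upper, label, action in SCORE_BANDS:
        if score <= upper:
            return label, action
    # Anything at 50 or above falls through to the top band.
    return "CRITICAL", "Blocked, no override"


print(verdict(47))  # ('HIGH RISK', 'Blocked, requires override')
```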

How Does Deep AI Vulnerability Scanning Work?

Deep vulnerability scanning uses AI models to analyze code for logic-level vulnerabilities that pattern-based tools miss—SQL injection, authentication bypasses, timing attacks, and other flaws that require understanding code context and intent.

OpenAI Aardvark/Codex Security

Aardvark is an autonomous AI agent powered by GPT-5 that scans code on every commit. Its key capabilities include sandboxed validation that confirms a vulnerability is actually exploitable before reporting it, auto-patching through Codex that generates fixes for human review, and a dedicated malware analysis pipeline launched in February 2026. OpenAI reports a 92% detection rate on benchmark repositories and says Aardvark has discovered over 10 CVEs in open-source projects.

Anthropic Claude Code Security

Claude Code Security uses Anthropic's most capable Claude models with an adversarial verification approach, in which a multi-agent system challenges each finding to reduce false positives. It generates context-aware patches with explanations, and Anthropic uses it on its own codebase. It is currently in research preview with a waitlist.

Which Threats Does Each Layer Catch?

Each tool in the stack is purpose-built for different threat categories. Here's how coverage maps across the three layers:

| Threat Type | Sigil (Layer 1) | Aardvark (Layer 2a) | Claude Code Security (Layer 2b) |
|---|---|---|---|
| Malicious install hooks | Blocks before execution | Does not catch (post-install) | Does not catch (post-install) |
| Supply-chain attacks | Primary focus | Limited coverage | Limited coverage |
| Obfuscated malware | Pattern detection | Malware analysis | Context analysis |
| SQL injection | Pattern-based | Deep analysis with sandbox | Deep analysis with context |
| XSS vulnerabilities | Pattern-based | Sandboxed testing | Context-aware detection |
| Authentication bypasses | Not focused | Logic analysis | Logic analysis |
| Zero-day vulnerabilities | Pattern-only | AI reasoning | AI reasoning |
| Known CVEs | Threat intelligence DB | Comprehensive | Comprehensive |

The critical takeaway: no single tool provides complete coverage. Sigil excels at catching threats before they execute but doesn't perform deep logic analysis. The AI-powered scanners excel at finding subtle vulnerabilities in your own code but can't prevent a malicious package from running during installation.

How Do the Three Layers Work Together? A Real-World Scenario

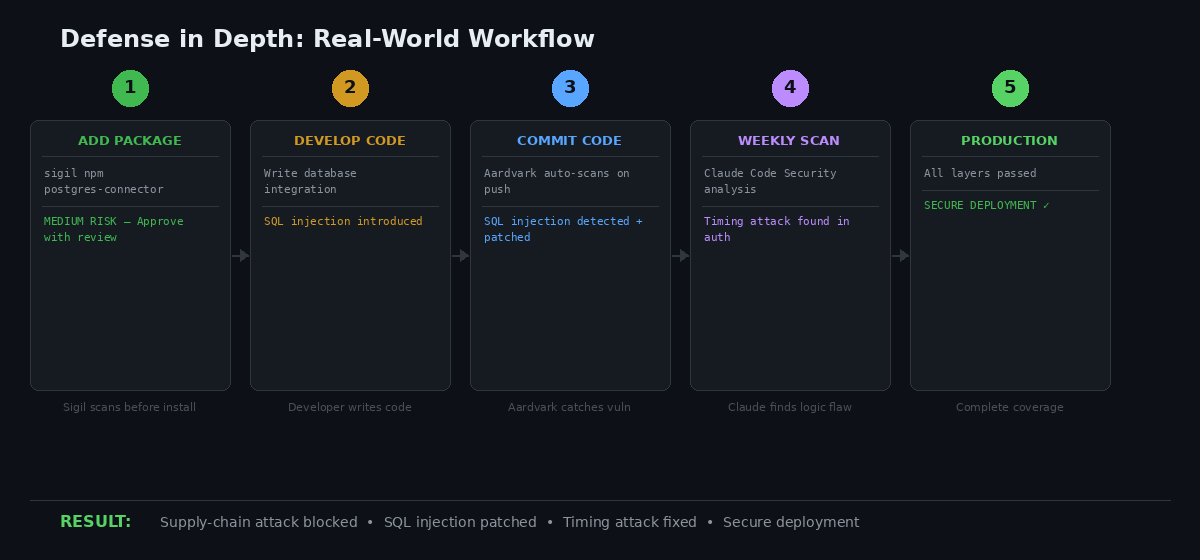

Consider a developer building an AI agent that integrates with external databases.

Step 1 — Pre-install scan (Sigil). The developer runs sigil npm @some-company/postgres-connector. Sigil quarantines the package, scans it across all six phases, and returns a MEDIUM RISK verdict: network access to PostgreSQL (expected for a database connector), environment variable usage for credentials, and no malicious patterns detected. The developer approves the install.

Step 2 — The developer writes integration code that includes an SQL query using string interpolation—a common but dangerous pattern.

Step 3 — Commit triggers deep scan (Aardvark). Aardvark automatically analyzes the commit, identifies the SQL injection vulnerability, confirms it's exploitable in a sandbox environment, and generates a parameterized query patch via Codex.
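
The mistake in Step 2 and the fix in Step 3 look roughly like the following. This minimal sketch uses Python's built-in `sqlite3` in place of a PostgreSQL driver so it runs anywhere (an assumption; PostgreSQL drivers such as psycopg use `%s` placeholders rather than `?`). The table and function names are hypothetical. The payload is the kind of input a sandboxed validator would use to confirm that the bug is actually exploitable.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """In-memory database with two users, standing in for the real backend."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Parameterized: the driver passes `name` as data, never as SQL.
    return conn.execute("SELECT email FROM users WHERE name = ?", (name,)).fetchall()


conn = make_demo_db()
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2 (every row leaks)
print(len(find_user_safe(conn, payload)))  # 0
```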

Step 4 — Weekly security audit (Claude Code Security). A scheduled scan of the entire codebase identifies a timing attack vulnerability in the authentication layer. The code checks a secret token with ordinary string equality, which can stop at the first mismatched character, so response times can leak how much of a guessed token is correct. Claude suggests using constant-time comparison and rate limiting.
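
The fix suggested in Step 4 combines two standard defences. Here is a minimal sketch, assuming a token-based check. `tokens_match`, `AttemptLimiter`, and `authenticate` are hypothetical names and the limits are arbitrary. A production limiter would usually keep its state in shared storage rather than process memory.

```python
import hmac
import time
from collections import defaultdict, deque
from typing import Callable


def tokens_match(supplied: str, expected: str) -> bool:
    """Compare a supplied secret with the expected one in constant time.

    Unlike `==`, hmac.compare_digest avoids short-circuiting on the first
    differing byte (it can still reveal whether the lengths differ).
    """
    return hmac.compare_digest(supplied.encode(), expected.encode())


class AttemptLimiter:
    """Sliding window: at most `max_attempts` attempts per `window` seconds per client."""

    def __init__(self, max_attempts: int = 5, window: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_attempts = max_attempts
        self.window = window
        self.clock = clock  # injectable so tests can control time
        self._attempts = defaultdict(deque)

    def allow(self, client_id: str) -> bool:
        now = self.clock()
        attempts = self._attempts[client_id]
        # Drop timestamps that have aged out of the window.
        while attempts and now - attempts[0] >= self.window:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)  # every attempt counts, successful or not
        return True


def authenticate(limiter: AttemptLimiter, client_id: str,
                 supplied: str, expected: str) -> bool:
    """Reject when rate-limited; otherwise compare in constant time."""
    return limiter.allow(client_id) and tokens_match(supplied, expected)
```

Constant-time comparison removes the timing signal. Rate limiting caps how many measurements or guesses an attacker can make, so each measure covers what the other leaves open.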

Result: coverage at every stage of the development lifecycle. Layer 1 vetted the connector before any of its code could run. Layer 2a caught an SQL injection in the developer's own code. Layer 2b found a timing attack that manual review and pattern matching would likely both miss.

How Do You Set Up the AI Security Stack?

Getting started takes about five minutes for the free tier.

Step 1: Install Sigil

```bash
# macOS / Linux
brew install nomarj/tap/sigil

# npm (all platforms)
npm install -g @nomarj/sigil

# Or one-liner install
curl -sSL https://sigilsec.ai/install.sh | sh
```

Step 2: Enable Auto-Scanning

After running sigil install, shell aliases make the quarantine workflow invisible. Use familiar commands with automatic protection:

| Alias | What It Does |
|---|---|
| gclone | git clone with quarantine and scan |
| safepip | pip install with pre-install scan |
| safenpm | npm install with pre-install scan |
| audithere | Scan current directory |

Step 3: Join Deep Scanner Waitlists

Aardvark/Codex Security is in private beta at openai.com. Claude Code Security is in research preview at claude.com/solutions/claude-code-security. Both are limited release—join whichever waitlist you can access first.

Is Sigil Free for Commercial Use?

Yes. Sigil is Apache 2.0 licensed and free for all use cases. The CLI includes all six scan phases, shell aliases, and git hooks at no cost.

Paid tiers add cloud-backed features:

| Feature | Open Source (Free) | Pro ($29/mo) | Team ($99/mo) |
|---|---|---|---|
| Full CLI scanning | Included | Included | Included |
| Cloud threat intelligence | — | Included | Included |
| Scan history | — | 90 days | 1 year |
| Web dashboard | — | Included | Included |
| Team management and policies | — | — | Up to 25 seats |
| CI/CD integration | — | — | Included |

The free tier provides complete local scanning. Paid plans add the community-powered threat intelligence database, where every scan from every user contributes anonymised pattern data and confirmed malicious package signatures propagate to all users within minutes.
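
The section does not say how malicious package signatures are represented. One common technique is to identify a confirmed-malicious artifact by its cryptographic hash, so that only a digest has to be shared, not the code or any user path. Here is a minimal sketch under that assumption. The `known_bad` set stands in for whatever feed the paid tiers sync, and the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 16) -> str:
    """Hex SHA-256 of a file, read in chunks so large archives stay cheap."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_known_malicious(path: Path, known_bad: set[str]) -> bool:
    """True if the artifact's digest appears in the locally cached blocklist."""
    return sha256_file(path) in known_bad
```

Hash matching only catches byte-identical copies of a known artifact. A repackaged variant gets a new digest, which is why this kind of lookup supplements the local pattern phases rather than replacing them.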

Frequently Asked Questions

Do I need all three tools in the AI security stack?

At minimum, you need Sigil for pre-installation protection (free, available today) and one deep scanner—either Aardvark or Claude Code Security (both in limited release). The two deep scanners compete with each other, so choose whichever you get access to first. Sigil complements both.

Is a three-layer security stack overkill for AI development?

No. Each layer catches fundamentally different threat categories. Sigil prevents an estimated 95% of supply-chain attacks at the installation boundary. Aardvark and Claude Code Security find logic-level vulnerabilities in your own code that Sigil doesn't analyze. Defense-in-depth is standard practice in security engineering because no single tool catches everything.

What is the performance impact of running Sigil?

Sigil scans complete in under 3 seconds for typical repositories. Aardvark runs automatically in the background on each commit. Claude Code Security runs on-demand or on a schedule. The time cost is negligible compared to the security benefit.

Can Sigil work without cloud connectivity?

Yes. All six scan phases run locally in offline mode using embedded pattern rules. Threat intelligence lookups and scan history sync are skipped, but you still get full local analysis with risk scoring and verdicts. Authenticated mode adds cloud threat intel and contributes anonymised scan data to the community database.

What is the difference between Aardvark and Claude Code Security?

Both perform deep AI-powered vulnerability scanning but use different approaches. Aardvark (by OpenAI) is powered by GPT-5, reports a 92% detection rate on benchmarks, includes a malware analysis pipeline, and auto-scans on every commit. Claude Code Security (by Anthropic) uses adversarial multi-agent verification to reduce false positives, generates context-aware patches with reasoning explanations, and is used internally by Anthropic. Both are currently in limited release.

How does Sigil compare to Snyk and Socket.dev?

Sigil differs from existing tools in three key ways: it uses a quarantine-first workflow that prevents code from executing before it's scanned (Snyk and Socket.dev don't quarantine), it's purpose-built for the AI agent ecosystem including MCP servers and agent tooling (Socket.dev is npm-only), and it aggregates community threat intelligence specific to AI development packages. Snyk and Dependabot check dependency trees for known CVEs but don't scan source code for intentional malice.

Sigil is open source on GitHub.

Install Sigil: sigilsec.ai